What a difference a year makes. In 2020 the APS March Meeting was one of the first casualties of the worsening COVID-19 pandemic, with the event cancelled just hours before it was due to get under way. This year, like all other major scientific conferences, the March Meeting will be convened online – enabling physicists in all parts of the world to explore the latest research breakthroughs and technical innovations.

The APS is expecting more than 11,000 scientists and students to log on for the online event. The main draw will be the scientific programme, with parallel sessions running from Monday 15 March to Friday 19 March, which will be complemented by pre-meeting tutorials and short courses, a series of Industry Days, and events designed specifically for undergraduate students to present their research, learn about career options, and connect with the scientific community.

A virtual exhibit will run from Monday through to Thursday, with company representatives available to discuss their products from 12.00 p.m. to 3.00 p.m. (Central Time). A few of the new product innovations that will be presented at the exhibit are highlighted below.

Superconducting magnet system offers more efficient cooling

The Janis DryMag 1.5 K superconducting magnet system from Lake Shore Cryotronics offers a more cost-effective solution for low-temperature material research. Providing a continuous temperature range of 1.5 K to 420 K – even with the magnet operating at full field – the cryogen-free system now enables an easy shift between in-plane and out-of-plane measurements by offering both a 2D vector field magnet configuration and 0–90° precision sample rotation.

With an initial cooldown time of less than 24 hours (with the 9 T magnet), the system offers accurate and simultaneous temperature control of the sample mount and the surrounding helium exchange gas. The sample is located in static helium exchange gas for efficient cooling, regardless of sample material or shape.

In addition, the system is available in optical and non-optical geometries, and with a sample-in-vacuum configuration. An optional electrical transport measurement package is available, which includes Lake Shore’s M91 FastHall measurement controller for much faster, more precise Hall measurements. This controller offers measurement times up to 100 times faster than typical Hall systems, particularly when measuring low-mobility materials.

For more information about the DryMag system, visit lakeshore.com/DryMag.

Integrated unit simplifies the generation of complex waveforms

The new Proteus RF arbitrary waveform generators/transceivers from Tabor Electronics allow complex pulse shapes and phases to be easily created up to 8 GHz, eliminating the need for cumbersome IQ modulator/oscillator set-ups. With an optional high-speed digitizer, the Proteus can be converted into an arbitrary waveform transceiver to provide closed-loop measurement capability within a single unit and programming environment.

A variety of interpolators, IQ modulators and numerically controlled oscillators are integrated into each channel, allowing complex RF signals to be generated directly from the Proteus instrument. This integrated approach eliminates the limitations of external IQ modulators and mixers, such as IQ mismatch and in-band carrier feed-through. Each channel is coherent and fully independent, making it possible to produce multichannel time/phase aligned signals, or signals of different frequencies and characteristics, from a single unit.
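For readers unfamiliar with the approach, digital IQ modulation with a numerically controlled oscillator (NCO) can be sketched in a few lines. The Python below is a minimal illustration of the general technique only, not Tabor's programming interface: the function names, the 1 GHz carrier and the 8 GS/s sample rate are all hypothetical values chosen for the example. Because the mixing is done numerically, the I and Q paths are matched by construction, which is why the mismatch and feed-through of analogue mixers do not arise.

```python
import math

def iq_upconvert(i_samples, q_samples, carrier_hz, sample_rate_hz):
    """Mix baseband I/Q samples onto a carrier produced by a software NCO.

    Implements s[n] = I[n]*cos(w*n) - Q[n]*sin(w*n), where w is the
    NCO phase increment per sample.
    """
    if len(i_samples) != len(q_samples):
        raise ValueError("I and Q must have the same length")
    step = 2.0 * math.pi * carrier_hz / sample_rate_hz  # phase increment per sample
    out = []
    for n, (i, q) in enumerate(zip(i_samples, q_samples)):
        phase = step * n
        out.append(i * math.cos(phase) - q * math.sin(phase))
    return out

def phase_shifted_tone(phase_rad, carrier_hz, sample_rate_hz, n_samples):
    """Constant I/Q values set the phase of the carrier: I = cos(phi), Q = sin(phi)."""
    i = [math.cos(phase_rad)] * n_samples
    q = [math.sin(phase_rad)] * n_samples
    return iq_upconvert(i, q, carrier_hz, sample_rate_hz)

# Hypothetical example: a 1 GHz tone sampled at 8 GS/s, shifted by 90 degrees.
tone = phase_shifted_tone(math.pi / 2, 1e9, 8e9, 16)
```

Changing the pulse phase or envelope only requires changing the I and Q sample lists, which is the sense in which complex pulse shapes can be defined entirely in software.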

The Proteus RF AWG exploits a high-speed PCI Express interface that provides data transfer speeds of up to 64 Gb/s. It offers 16 GS of memory, allowing even long and complex waveform sequences to be downloaded quickly, while interpolation can reduce the waveform size by up to a factor of 8 to save even more experimental set-up time. For applications requiring more memory or real-time changes to the waveform, the Proteus arbitrary waveform transceiver allows waveforms to be streamed from disk to instrument at speeds of up to 6 GS/s.
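To put these figures in context, the short Python estimate below works out an idealized download time. It is a back-of-envelope sketch only: the 2 bytes per sample, the decimal reading of "GS" as 10^9 samples, and the assumption that the link runs at its full 64 Gb/s with no protocol overhead are all assumptions made for the example, not specifications from Tabor.

```python
def transfer_time_s(n_output_samples, bytes_per_sample, link_gbit_per_s, interpolation=1):
    """Idealized time to download a waveform over the link.

    With interpolation, only n_output_samples / interpolation samples
    need to be stored and transferred.
    """
    stored_samples = n_output_samples / interpolation
    bits = stored_samples * bytes_per_sample * 8
    return bits / (link_gbit_per_s * 1e9)

# Assumed: 16e9 samples, 2 bytes per sample, 64 Gb/s sustained.
full = transfer_time_s(16e9, 2, 64)                      # 4.0 seconds
reduced = transfer_time_s(16e9, 2, 64, interpolation=8)  # 0.5 seconds
```

Under these assumptions, a waveform that would fill the entire memory takes about 4 s to download, falling to about 0.5 s when eightfold interpolation is used.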

Three form-factor versions are available to suit the needs of the experiment: the modular PXIe format offers unlimited time-aligned channels with the fastest data transfer rates, while the desk and bench versions offer up to 12 fully independent channels.

Integrated platform delivers quantum control

New from Quantum Machines (QM) is the Quantum Orchestration Platform (QOP), a complete hardware and software solution that allows even the most complex quantum algorithms to be run on any quantum processor. Unlike classical computation, where the computer logic is embedded within the processor itself, quantum processors have no built-in logic. Logic operations are instead performed by sending high-frequency pulses to the quantum processor from tailor-made classical hardware.

To address this challenge, QM has developed an integrated solution for controlling quantum systems. At the core of the solution is the OPX, the hardware element of the QOP, which incorporates multiple waveform generators, digitizers and processing units that are all integrated on a single FPGA with a unique and scalable design. Inside the OPX is a dedicated pulse processor that allows for advanced multi-qubit manipulation, quantum error correction, and full system scaling.

The OPX is designed to be easily programmed using QUA, a powerful yet intuitive programming language, which enables the most complex experiments and algorithms to be run quickly and easily. With seamless compatibility and powerful capabilities such as ultra-low feedback latency and general control flow, the platform delivers real-time processing to speed up experiments by as much as an order of magnitude.

Founded by leading quantum researchers, Quantum Machines partners with development teams at the forefront of quantum computing, including multinational corporations, start-ups, government laboratories, and academic institutions, to help advance the future of quantum computing.

Novel AFM mode enables electrochemical mapping for battery research

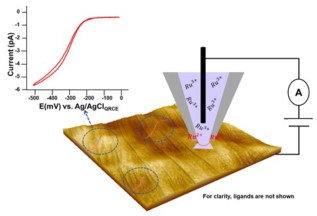

The NX range of atomic force microscopes (AFMs) from Park Systems now supports scanning electrochemical cell microscopy (SECCM), a new pipette-based nanoelectrochemical scanning probe technique for investigating the local electrochemical properties of electrode surfaces. For the first time this technique is allowing scientists studying electrocatalysis and energy storage to correlate electrochemical activity with the nanostructure of electrochemical interfaces.

SECCM works by inserting a quasi-reference counter electrode (QRCE) into a nanopipette filled with an electroactive species. The Z-scanner of the AFM is used to lower the nanopipette onto the contact surface, creating a meniscus that allows a tiny droplet, or nanoelectrochemical cell, to form. The electroactive species in the droplet reacts when a bias is applied between the QRCE and an electrode placed on the XY scanner, making it possible to create an electrochemical current map at various positions across the sample surface.

SECCM allows researchers to perform thousands of confined nanoelectrochemical measurements on a single surface, with droplet sizes ranging from a few hundred nanometers to a few microns. Researchers can easily alter the chemical system by swapping in a new pipette filled with a different electroactive species, and there is little need for special preparation of samples – making the technique both simple and cost-effective.

In one recent study, the SECCM mode of the Park NX12 system was used to study an electrochemically reversible redox process at the surface of highly ordered pyrolytic graphite (HOPG). Localized nanoscopic cyclic voltammetry measurements were taken each time the meniscus touched the surface, providing a spatially resolved map of the surface electroactivity of HOPG at the micro- and nanoscale.

The study indicates that the measurements are robust and reproducible, with a current limit as low as a few picoamperes. According to application scientists at Park Systems, this capability could also facilitate the rational design of functional electromaterials for use in energy storage studies and for corrosion research.

Cryostat supports quantum computing scale-up

Oxford Instruments NanoScience will be showcasing its latest innovation in cryogen-free dilution refrigerator technology for quantum computing scale-up, the ProteoxLX, at the APS March Meeting. The ProteoxLX is part of Oxford Instruments’ family of next-generation dilution refrigerators, which all share the same modular layout to provide cross-compatibility and added flexibility for cryogenic installations.

Optimized for scaling up quantum computing systems, the LX system supports maximum qubit counts, with a large sample space and ample coaxial wiring capacity. Low vibration features reduce noise and support long qubit coherence times, while the system provides full integration of signal conditioning components.

The LX also offers two fully customizable secondary inserts for an optimized layout of cold electronics, as well as high-capacity input and output lines that are fully compatible and interchangeable across the Proteox family.

Assoc. Prof. Bora Tas is director and head of MR-Linac (Unity) & Linac Business Lines at Elekta in Istanbul, Turkey. He has more than 10 years of clinical experience managing the physics and dosimetry for a facility with a high-volume, hospital-based radiation oncology department. He is skilled in X-rays, electrons, MRI, particle therapy, medical devices, dosimetry, and oncology management, as well as having lots of experience of working with VMAT, IMRT, SRS and SBRT techniques. He is an editorial board member and reviewer of scientific journals.
