Scientists in the UK have developed a new light-based technique for locating objects deep within biological tissue that could help physicians better diagnose lung diseases. Implemented without bulky equipment and in the glare of fluorescent lighting, the technique involves precisely measuring how long it takes single photons to leave the body after being sent down a fibre-optic extension of an endoscope.

The research has been carried out as part of the Proteus project, in which more than 40 scientists from three different universities – Edinburgh, Bath and Heriot-Watt – are working together to better observe bacteria in lungs. Doctors look inside lungs using endoscopes – long narrow tubes that they insert into the lung’s airways and which they guide using a lensed camera built into the device. However, as Michael Tanner of Heriot-Watt explains, endoscopes are usually more than a centimetre in diameter, which means they cannot get through the smaller airways and into the inner lung where bacteria grow rapidly.

Getting access to this area involves pushing millimetre-diameter bundles of optical fibres down the centre of the endoscope and then out of the far end. But even though these fibres can take images of the inner lung, there is no way to gauge exactly where they end up and therefore where the imaged bacteria are. As Tanner puts it, medics rely either on “expert practice or pot luck”.

Multiple scattering

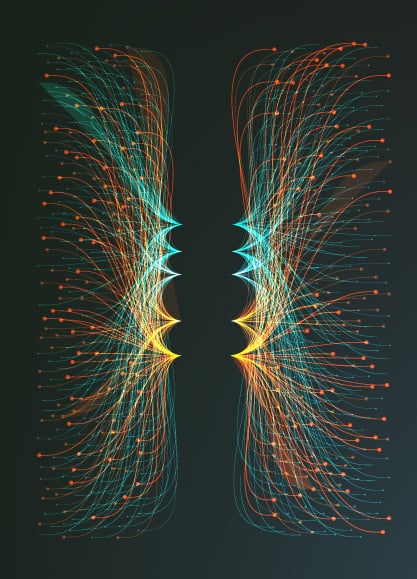

The solution devised by Tanner and colleagues is in principle very straightforward. It involves simply sending additional pulses of light down the fibre and then observing where they leave the body. Light is heavily absorbed when passing through biological tissue, while the photons that do make it out are usually scattered many times, meaning they lose much of the information about their point of origin. But there is a small chance that even over long distances any given photon will pass straight through with very little scattering.

Because these essentially “ballistic” photons travel in a straight line they not only reveal where they come from – the fibre tip – but they also emerge from the body ahead of all the other photons. So the trick in establishing the tip’s location is to time the arrival of the photons so precisely that the ballistic ones can be isolated from the rest.

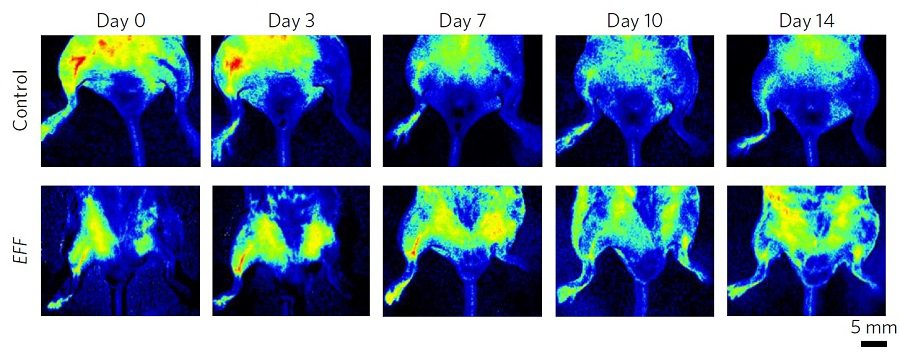

To implement their scheme, Tanner and co-workers sent a series of very short near-infrared laser pulses (repetition rate: 80 MHz) through a length of fibre-optic cable inserted into a range of biological samples. They captured the emerging light using an array of single-photon detectors having a temporal resolution of around a tenth of a nanosecond. And they chose the light’s wavelength – 785 nm – to both limit absorption and distinguish the very weak signal from hospital fluorescent lighting, which has a number of well-defined spectral peaks in the visible range.
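
To give a feel for the timescales involved, the short Python sketch below works through the arithmetic. The 80 MHz pulse rate and 0.1 ns timing resolution are the figures quoted above, and the 25 cm path is the torso thickness reported in the experiments. The tissue refractive index of 1.4 is an assumed typical value, not one taken from the research, and the function names are purely illustrative.

```python
# Back-of-envelope timing figures for time-resolved photon detection.
C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum
REP_RATE_HZ = 80e6                # laser pulse rate quoted in the article
TIMING_RESOLUTION_NS = 0.1        # detector timing resolution quoted in the article
TISSUE_INDEX = 1.4                # ASSUMPTION: typical soft-tissue refractive index


def speed_in_tissue_cm_per_ns(n=TISSUE_INDEX):
    """Speed of light in a medium of refractive index n, in cm per ns."""
    return C_VACUUM_M_PER_S / n * 100.0 / 1e9


def pulse_period_ns(rep_rate_hz=REP_RATE_HZ):
    """Time between successive laser pulses, in ns."""
    return 1e9 / rep_rate_hz


def path_per_bin_cm(resolution_ns=TIMING_RESOLUTION_NS, n=TISSUE_INDEX):
    """Straight-line distance light covers in tissue during one timing bin."""
    return speed_in_tissue_cm_per_ns(n) * resolution_ns


def straight_transit_ns(thickness_cm, n=TISSUE_INDEX):
    """Time for an unscattered photon to cross a given thickness of tissue."""
    return thickness_cm / speed_in_tissue_cm_per_ns(n)


if __name__ == "__main__":
    print(f"pulse period:           {pulse_period_ns():.2f} ns")
    print(f"path per timing bin:    {path_per_bin_cm():.2f} cm")
    print(f"25 cm straight transit: {straight_transit_ns(25.0):.2f} ns")
```

Under these assumptions the pulses arrive 12.5 ns apart, one 0.1 ns timing bin corresponds to roughly 2 cm of path in tissue, and a photon travelling straight through 25 cm of tissue takes a little over 1 ns. Any photon that has scattered along a longer route arrives later than that.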

Early arrivals

Because each laser pulse results in very few ballistic photons leaving the tissue, the researchers had to build up histograms from many pulses to establish exactly which detectors had snared the earliest-arriving photons (and therefore where the fibre tip was). Using exposure times of up to 17 s, they had no problem doing this when burying the fibre tip inside a ventilated sheep’s lung or behind a human hand. But they could only gather limited statistics when placing it underneath a 25 cm-thick human torso, and to pin down the fibre-tip location in this case they had to turn down the background lighting.
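
The idea of building per-detector histograms and picking out the earliest arrivals can be illustrated with a toy simulation. The Python sketch below is not the researchers' analysis code. The 8×8 detector grid, the tip position, the count threshold and the arrival-time model are all assumptions made for illustration, and only the 0.1 ns bin width comes from the article.

```python
import random
from collections import Counter

BIN_WIDTH_NS = 0.1   # timing resolution quoted in the article
GRID = 8             # ASSUMPTION: hypothetical 8x8 detector array
TIP_PIXEL = (5, 2)   # ASSUMPTION: pixel in line with the fibre tip
MIN_COUNTS = 5       # ASSUMPTION: counts a bin needs to stand out from background


def simulate_arrivals(rng, scattered=2000, background=10, ballistic=40):
    """Toy model: return {pixel: [photon arrival times in ns after the pulse]}."""
    arrivals = {}
    for x in range(GRID):
        for y in range(GRID):
            dist = abs(x - TIP_PIXEL[0]) + abs(y - TIP_PIXEL[1])
            # Scattered photons arrive late, with a long exponential tail.
            times = [2.0 + 0.3 * dist + rng.expovariate(1.0)
                     for _ in range(scattered)]
            # Room light is uncorrelated with the laser, so it is flat in time.
            times += [rng.uniform(0.0, 12.5) for _ in range(background)]
            arrivals[(x, y)] = times
    # A handful of near-unscattered photons reach the pixel facing the tip early.
    arrivals[TIP_PIXEL] += [rng.gauss(1.05, 0.03) for _ in range(ballistic)]
    return arrivals


def histogram(times):
    """Count photons per timing bin."""
    return Counter(int(t / BIN_WIDTH_NS) for t in times)


def earliest_significant_bin(hist):
    """Earliest bin holding at least MIN_COUNTS photons, or None."""
    bins = [b for b, count in hist.items() if count >= MIN_COUNTS]
    return min(bins) if bins else None


def locate_tip(arrivals):
    """Return (pixel, bin) for the pixel whose signal rises earliest."""
    best_pixel, best_bin = None, None
    for pixel, times in arrivals.items():
        b = earliest_significant_bin(histogram(times))
        if b is not None and (best_bin is None or b < best_bin):
            best_pixel, best_bin = pixel, b
    return best_pixel, best_bin


if __name__ == "__main__":
    pixel, first_bin = locate_tip(simulate_arrivals(random.Random(0)))
    print("estimated tip pixel:", pixel)
    print("earliest significant arrival (ns):", first_bin * BIN_WIDTH_NS)
```

The count threshold plays the role that long exposures play in the experiment: a single early photon could easily be background light, so a bin is only trusted once enough photons have piled up in it. With fewer pulses, or brighter room lighting, the early bins become harder to separate from noise, which is consistent with the difficulty reported for the torso measurement.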

One snag with the latest work was the inability to independently confirm the location of the fibre tip. Although the researchers tied down the ballistic photons to just one or two possible pixels – equating to a spatial resolution of about a centimetre – Tanner says it is conceivable, although unlikely, that the photons had, for example, bounced off an air pocket and were therefore not travelling directly from the tip. To remove any doubts, in future they plan to use tissue phantoms that can be dissected after use to reveal the true location of the tip.

The group also aims to reduce the exposure time to a second or less, even for thick samples. This would allow the fibre tips to be located in real time – so enabling a clinician to overlay that position on, say, an X-ray image of a lung. Doing so, explains Tanner, could involve adding optics to fibre tips or upping the density of detector elements. As he points out, laser power, and therefore signal strength, is limited by safety considerations.

Key-hole surgery

Ultimately, adds Tanner, the group hopes to apply the new technology more broadly. Being relatively simple and compact – the prototype camera sitting in a box about the size of a biscuit tin and mounted on a tripod – he reckons that the technology could in principle be applied to all medical procedures in which instruments are inserted into the body, such as key-hole surgery and interventions requiring catheters. “Sometimes medics aren’t sure whether a catheter has gone the right way up a vessel and so need to use X-rays, which may cause delay,” he says. “Real-time imaging would be very useful.”

Hervé Rigneault, a physicist at the Institut Fresnel in France, points out that the latest technique is not the only one that could be used to probe deep into the lung. Among the alternatives, he says, is photo-acoustics, which creates biomedical images from sound waves generated by laser heating. “But,” he adds, “this is a nice piece of work that brings another possible imaging modality.”

The research is described in Biomedical Optics Express.